Agentic AI Pindrop Anonybit

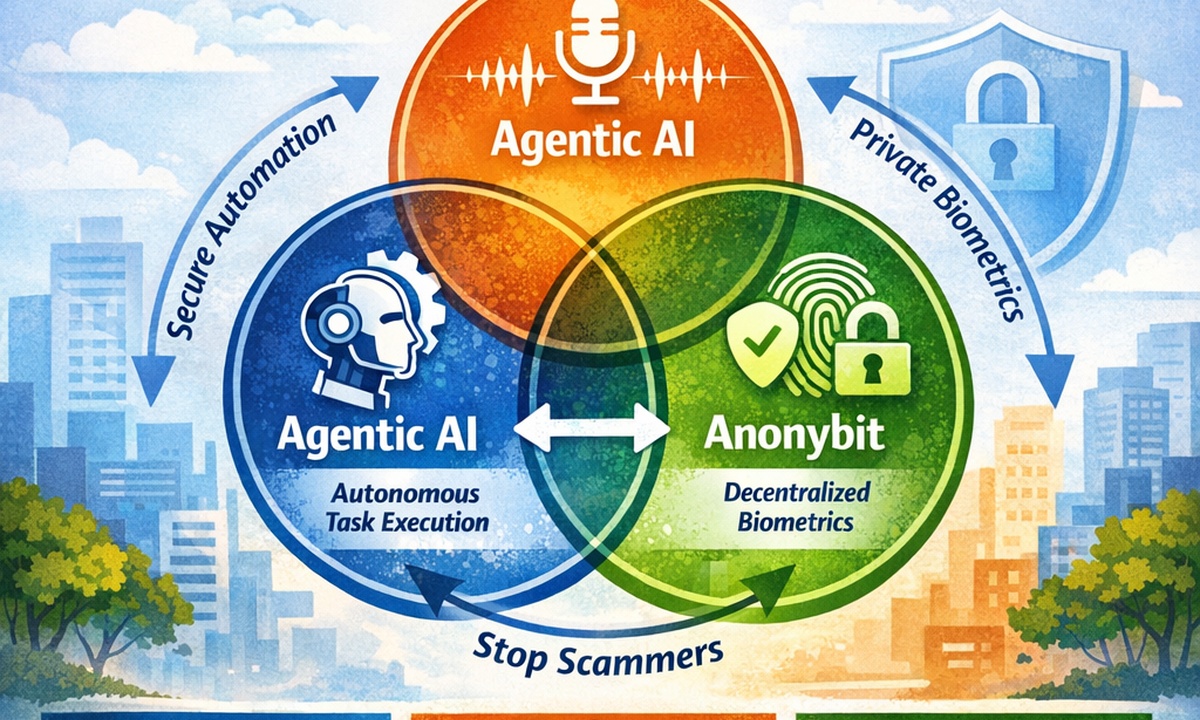

If you keep hearing “agentic ai pindrop anonybit,” you are not alone. People are trying to connect three ideas that feel separate at first. Agentic AI is about software agents that can plan, act, and finish tasks with less hand-holding. Pindrop is known for voice security in contact centers, with fraud and deepfake detection built around call audio signals. Anonybit focuses on privacy-preserving biometrics, built to reduce the risk that comes from storing raw biometric data in one place.

Here is the simple link: agentic systems need identity they can trust, and voice channels are now a top target for synthetic fraud. A real person can get tricked by a fake voice. A software agent can get tricked even faster if identity checks are weak. That’s why this topic matters. In this guide, you’ll learn what each part does, how they fit together, what real risks show up, and how teams can build a safer flow that still feels smooth for customers.

Profile Table: Quick Snapshot (All in One Place)

| Item | What it is | Best known for | Where it shows up | Core value |
|---|---|---|---|---|
| Agentic AI | Goal-driven AI that can plan and act with limited supervision | Autonomous task execution and tool use | Support ops, security ops, workflows | Speed with guardrails |
| Pindrop | Voice security company | Voice fraud detection and deepfake defense | Contact centers, IVR, phone-based verification | Trust signals from voice + call data |
| Anonybit | Biometrics and identity security company | Privacy-preserving biometric authentication | Digital onboarding, help desk, workforce access | Safer biometric storage + use across channels |

What Agentic AI Pindrop Anonybit Means in Real Life

Agentic AI is a style of AI that can take a goal and move toward it with fewer step-by-step prompts. Think: “Resolve this customer issue,” or “Investigate this suspicious login,” and the system breaks it into steps, uses tools, checks results, then continues. IBM describes agentic AI as systems that can accomplish goals with limited supervision, often using multiple agents that handle parts of a larger job. AWS explains it as AI that can act independently toward goals, not just respond with text.

This matters because it changes how security failures happen. A normal chatbot may give a wrong answer. An agent can do a wrong action, like resetting access, changing account details, or approving a risky request. So identity becomes the “front door.” If the front door check is weak, agentic speed becomes agentic damage. That is why people tie agentic ai pindrop anonybit together. They want autonomy, but they also need reliable identity checks, audit trails, and privacy rules that hold up under pressure.

Why Voice Is Now a High-Risk Channel

Phone support feels old-school, but it still moves real money. Banks, insurance, telecom, retail, and healthcare handle account changes and recovery through calls. Attackers know that. Deepfake voices and synthetic speech can mimic real people well enough to fool humans, agents, and basic voice checks. Pindrop’s own material positions deepfake detection as a way to distinguish human callers from synthetic audio early in a call by analyzing speech characteristics.

Pindrop’s 2025 Voice Intelligence & Security Report highlights large-scale fraud pressure and ties it to AI-driven tactics. Even if you never read a report, you can feel the trend: scammers don’t need to steal a password if they can talk their way into an account reset. Voice becomes the shortcut. Once that happens, the attacker can grab OTPs, change email addresses, switch payout details, and lock the real user out. Any system that relies on “the call sounded normal” is playing a risky game. This is the exact spot where agentic ai pindrop anonybit becomes a practical conversation, not buzzwords.

How Each Component Works Together

The Shared Problem: Trust at “Decision Time”

Here is the moment that matters most: the instant a system decides “yes, this is the right person,” or “yes, this agent is allowed to do this,” or “yes, this request is safe.” In many companies, those moments happen in messy places: a phone call, a help desk ticket, a chat, or a rushed internal request.

Agentic AI raises the stakes because decision time happens faster and more often. A human might take 10 risky actions per day. An agent can take 10 in a minute. Without strong checks, it can approve things that look normal on the surface. With better checks, it can refuse risky actions and route them to safer flows. This is the practical frame for agentic ai pindrop anonybit:

Pindrop supplies voice risk signals

Anonybit supplies privacy-safe identity checks

Agentic AI supplies automation that follows a controlled policy

When you connect them, you get a safer path for automation.

A Simple “Trio Workflow” You Can Picture

Let’s turn this into a clear mental model you can reuse. Imagine a customer calls to change their payout bank account. That is a high-risk action. A basic system might ask for date of birth and last four digits. Fraud teams know that data is often leaked. A stronger flow looks like this:

Pindrop flags a risk score and checks for synthetic voice signals early in the call

If risk is low, the request moves forward with normal friction

If risk is high, the system triggers step-up verification

The step-up uses privacy-preserving biometric confirmation through a secure identity layer that doesn’t expose raw biometric data

Agentic AI guides the agent through the policy: what to ask, what to verify, what to log, and what to deny

That is agentic ai pindrop anonybit in action: voice risk signals + privacy-safe identity checks + automation that follows a controlled policy.
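If it helps to see the flow as logic, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `CallSignals` fields, the 0.7 threshold, and the `biometric_check` callback are hypothetical stand-ins, not Pindrop or Anonybit APIs.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Decision(Enum):
    PROCEED = "proceed_with_normal_friction"
    ALLOW_AFTER_STEP_UP = "allow_after_step_up"
    DENY = "deny_and_log"


@dataclass
class CallSignals:
    risk_score: float      # hypothetical 0.0-1.0 score from a voice-risk service
    synthetic_voice: bool  # hypothetical flag: audio looks machine-generated


RISK_THRESHOLD = 0.7  # illustrative cut-off, not a vendor default


def needs_step_up(signals: CallSignals) -> bool:
    """High risk means a suspected synthetic voice or a score at/above the cut-off."""
    return signals.synthetic_voice or signals.risk_score >= RISK_THRESHOLD


def handle_payout_change(
    signals: CallSignals, biometric_check: Callable[[], bool]
) -> Decision:
    """Low risk proceeds; high risk must pass the step-up check or is denied."""
    if not needs_step_up(signals):
        return Decision.PROCEED
    if biometric_check():  # stand-in for a privacy-preserving biometric confirmation
        return Decision.ALLOW_AFTER_STEP_UP
    return Decision.DENY
```

The step-up check is passed in as a callback so the sketch never touches biometric data itself. It only sees a yes or no.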

What Makes Agentic AI Risky Without Guardrails

Agentic AI can fail in ways that feel surprising. The system may pick the wrong tool, pull the wrong data, or take an action too early. In enterprise settings, this creates issues tied to transparency, auditability, and clear boundaries between what the AI can access and what it can change.

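The danger is not “AI is bad.” The danger is ungoverned autonomy. A safe agentic system needs guardrails: audit trails, clear boundaries between what the AI can access and what it can change, and reliable identity checks before high-risk actions.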

If you connect identity signals to those guardrails, the agent can behave more responsibly. Voice trust signals from Pindrop can raise the risk level. Privacy-first biometrics patterns from Anonybit can support step-up checks without forcing risky data storage choices. This is why agentic ai pindrop anonybit is not only a tech trend; it’s a design pattern for safer automation.
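Here is one way such guardrails could look in code. This is a rough Python sketch under stated assumptions: the tool names are made up, and the in-memory `audit_log` list stands in for whatever logging system a real team would use.

```python
from datetime import datetime, timezone

# Hypothetical tool names, split by what the agent may read vs. what it may change.
READ_TOOLS = {"lookup_account", "fetch_call_risk"}
WRITE_TOOLS = {"reset_password", "change_payout_account"}

# In-memory stand-in for a real audit trail.
audit_log: list[dict] = []


def authorize(tool: str, identity_verified: bool) -> bool:
    """Allow reads, allow writes only after identity is verified, log every decision."""
    if tool in READ_TOOLS:
        allowed = True
    elif tool in WRITE_TOOLS:
        allowed = identity_verified
    else:
        allowed = False  # unknown tools are denied by default
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "identity_verified": identity_verified,
        "allowed": allowed,
    })
    return allowed
```

The point of the design is that denials are logged too, so a reviewer can later see what the agent tried to do, not only what it did.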

Leadership Snapshots

Pindrop leadership

Role: Co-Founder, CEO & CTO of Pindrop

Known for: Work on telecommunications security; frequent speaker on phone fraud threats

Education: PhD in Computer Science from Georgia Institute of Technology

Company focus: Voice security: fraud detection, deepfake defense, call risk insights

Anonybit leadership

Role: Co-Founder & CEO of Anonybit

Known for: Work across biometrics, digital identity, fintech, cybersecurity; privacy advocacy

Company focus: Privacy-preserving biometrics and decentralized biometric storage framework

Practical aim: Strong authentication without central biometric “honeypots”

Where This Matters Most (Top Use Cases)

You will see the biggest impact in places where identity is messy and fraud is expensive. Contact centers are the obvious one. Pindrop positions its solutions around fraud detection, IVR protection, and deepfake defense in voice channels.

Other high-value use cases include digital onboarding, help desk support, and workforce access.

Anonybit describes enterprise and insurance use cases where privacy-preserving biometrics can support secure access and service interactions across channels. Now connect agentic AI: it can orchestrate steps, check policy rules, create audit logs, and reduce manual effort. The combined theme returns again: agentic ai pindrop anonybit turns identity from a single check into a smarter flow.

The “Privacy vs Security” Trap (And How to Avoid It)

Many teams think they must pick one: strong biometrics or strong privacy. That mindset creates bad designs. Strong identity can exist with privacy-safe storage and processing patterns. Anonybit’s messaging leans into that idea by emphasizing decentralized biometrics and privacy-by-design.

A healthier way to think:

If you store biometric data centrally, a breach becomes a long-term brand problem. If you refuse biometrics completely, you might rely on weak knowledge checks that criminals can bypass. Voice security fits here too. Voice is sensitive data, and deepfake attacks raise the fraud risk. A good agentic ai pindrop anonybit design tries to reduce fraud without creating a new data bomb that can explode later.
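To make “no single data bomb” concrete, here is a toy Python illustration of splitting a piece of data so that no single store holds anything useful. It uses plain XOR secret splitting, which is a generic textbook technique chosen for this sketch. It is not a description of how Anonybit or any other vendor works, and the function names are invented here.

```python
import secrets


def split_template(template: bytes, parts: int = 3) -> list[bytes]:
    """Split bytes into `parts` shares; every share is needed to rebuild the input."""
    if parts < 2:
        raise ValueError("need at least two shares")
    # All but the last share are pure random bytes.
    shares = [secrets.token_bytes(len(template)) for _ in range(parts - 1)]
    # The last share is the template XORed with every random share.
    last = template
    for share in shares:
        last = bytes(a ^ b for a, b in zip(last, share))
    shares.append(last)
    return shares


def combine_shares(shares: list[bytes]) -> bytes:
    """XOR all shares together to recover the original bytes."""
    result = bytes(len(shares[0]))
    for share in shares:
        result = bytes(a ^ b for a, b in zip(result, share))
    return result
```

Each share on its own is indistinguishable from random bytes, so a breach of one store reveals nothing about the original. A production system would need far more than this, such as key management, access control, and a way to match without exposing the data, none of which this sketch attempts.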

How Teams Can Start Without Overbuilding

You do not need a giant rebuild on day one. Most teams can start with a focused “high-risk action” list. Pick the top 5 actions that cause real loss. Common candidates include payout changes, password resets, address changes, SIM swaps, account recovery, and admin access grants.

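Then apply a tiered approach: low-risk requests move forward with normal friction, and high-risk requests trigger step-up verification.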

Pindrop-style call risk and deepfake signals can help decide the tier in real time. Anonybit-style privacy-preserving biometrics can support step-up checks without pushing you into risky data storage choices. Agentic AI can glue the steps together and document what happened. That is agentic ai pindrop anonybit as a practical rollout plan, not a big-bang project.
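A first rollout can be as small as a lookup table. The Python sketch below assumes a call-risk score between 0.0 and 1.0 and gives each high-risk action its own cut-off. The action names, the numbers, and the tier labels are all hypothetical placeholders that a fraud team would tune from its own loss data.

```python
# Hypothetical per-action cut-offs on a 0.0-1.0 call-risk score.
# Lower cut-off = the action is more sensitive, so step-up triggers sooner.
STEP_UP_CUTOFFS = {
    "payout_change": 0.3,
    "sim_swap": 0.3,
    "account_recovery": 0.4,
    "password_reset": 0.5,
    "address_change": 0.6,
}

# Actions not on the high-risk list only step up when the call looks very risky.
DEFAULT_CUTOFF = 0.8


def pick_tier(action: str, call_risk: float) -> str:
    """Return the tier for a request based on the action and the call-risk score."""
    cutoff = STEP_UP_CUTOFFS.get(action, DEFAULT_CUTOFF)
    return "step_up" if call_risk >= cutoff else "normal_friction"
```

With these placeholder numbers, the same moderately risky call gets step-up verification for a payout change but normal friction for an address change, which is the kind of focus the high-risk list is meant to give you.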

FAQs (Clear Answers)

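What does “agentic ai pindrop anonybit” mean in one line?

It means voice risk signals, privacy-safe identity checks, and automation that follows a controlled policy, all working together.

Can agentic AI run customer verification by itself?

It can orchestrate the steps, check policy rules, and create audit logs, but it needs guardrails, and high-risk requests should be routed to step-up verification.

What problem does Pindrop focus on most?

Voice fraud detection and deepfake defense in contact centers, IVR, and phone-based verification.

Why not store biometrics in one big database?

A central store becomes a “honeypot,” and a breach of it becomes a long-term problem.

Is voice a biometric?

Voice can be used as a biometric, and it is sensitive data, so it deserves the same care as any other biometric.

What is the safest way to start with this trio?

Start with a focused list of high-risk actions, then apply a tiered approach that reserves step-up verification for the riskiest requests.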

Conclusion: Build Trust That Keeps Up With AI

Automation is moving fast. Fraud is moving faster. If your business depends on calls, help desk support, or account recovery, trust is not a “nice extra.” It is the whole game. The combined idea behind agentic ai pindrop anonybit is simple: let agentic AI handle speed, let voice security spot synthetic threats, and let privacy-preserving biometrics keep identity strong without creating risky data piles.

If you want a smart next step, write down your top 5 fraud and takeover scenarios. Then map where identity checks fail. Once you see the weak points, it becomes much easier to design a safer flow that still feels easy for real users.